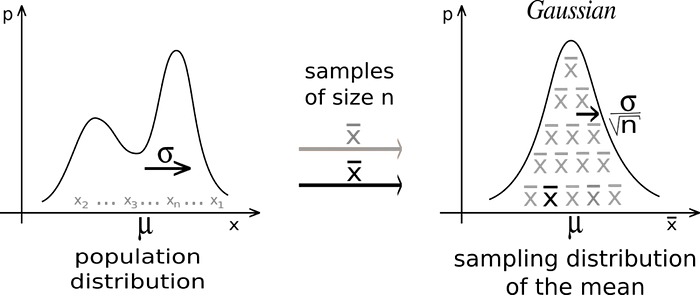

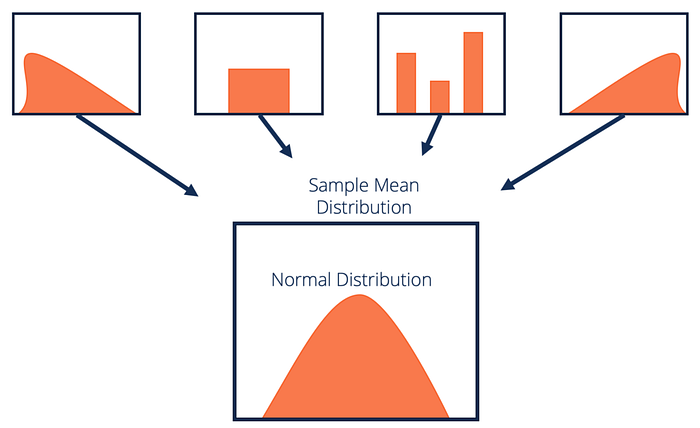

The Central Limit Theorem is one of the most fundamental theorems in statistics. It states that if we take many sufficiently large random samples from a population, the means of those samples will be approximately normally distributed, regardless of the distribution of the population itself (provided the population has a finite variance).

Before we expand any more on the theorem, let us understand some of the terminology used above.

Population: In statistics, we often want to study the characteristics of a population. A population is the set of all elements in a group, or all possible outcomes of an experiment. For example, if we are studying the voting preferences of Americans, the population consists of all citizens of the USA. Or, if we are trying to find the average outcome of rolling a fair die, the population consists of the outcomes of rolling the die infinitely many times.

Most often, studying the whole population is hard, time-consuming, and resource-intensive. So instead we rely on sampling.

Sampling: Sampling involves taking subsets of the population that are scaled-down representations of the whole, and combining the information obtained from these samples to draw conclusions about the population.

This is how most studies in inferential statistics are carried out: by taking representative samples of decent size and using them to draw inferences about the population. Not all samples, though, are equally useful.

A good sample must be:

- Representative of the whole population.

- Big enough to draw conclusions (a common rule of thumb is a sample size of n ≥ 30).

- Picked at random so as not to be biased towards any particular segment of the population.
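As a rough illustration of random sampling, here is a minimal Python sketch. The population (100,000 simulated rolls of a fair die), the seed, and the variable names are all assumptions made for this example; it only shows that a randomly picked sample of size 30 already gives a mean in the neighborhood of the population mean.

```python
import random
import statistics

random.seed(0)  # fixed seed so the sketch is reproducible

# Hypothetical population: 100,000 simulated rolls of a fair six-sided die.
population = [random.randint(1, 6) for _ in range(100_000)]

# A simple random sample of size 30, drawn without replacement,
# so every element of the population is equally likely to be picked.
sample = random.sample(population, k=30)

population_mean = statistics.fmean(population)
sample_mean = statistics.fmean(sample)

print(f"population mean:    {population_mean:.3f}")
print(f"sample mean (n=30): {sample_mean:.3f}")
```

Rerunning with a different seed gives a different sample mean each time; how those sample means vary is exactly what the Central Limit Theorem describes.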

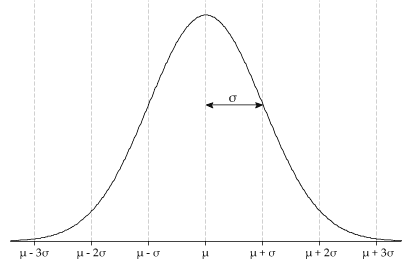

Normal Distribution: When the values of a population are spread symmetrically around the mean value μ, with most values close to the mean and progressively fewer farther away from it, you get a bell-shaped curve whose width is determined by the standard deviation σ.

This is called a Normal Distribution or a Gaussian Distribution with mean μ and standard deviation σ.

Coming back to the theorem now: if we draw samples of size n each from a population with mean μ and standard deviation σ, then the mean of each sample is an estimate of the population mean. According to the Central Limit Theorem, for large n these sample means are approximately normally distributed with mean μ and standard deviation σ/√n (equivalently, variance σ²/n).
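The behavior of the sample means can be checked with a small simulation. The sketch below is an illustration under stated assumptions, not a proof: it assumes an exponential population with rate 1 (a strongly skewed distribution whose mean and standard deviation are both 1), and the sample size, number of samples, and names are chosen only for this example. If the theorem holds, the sample means should center on μ, have a spread close to σ/√n, and put roughly 68% of their values within one standard deviation of their mean, as a normal distribution would.

```python
import math
import random
import statistics

random.seed(42)  # fixed seed so the sketch is reproducible

# Assumed population: exponential with rate 1 (mean 1, standard deviation 1).
MU, SIGMA = 1.0, 1.0
SAMPLE_SIZE = 50      # n: observations per sample
NUM_SAMPLES = 5_000   # how many samples we draw


def sample_mean(n):
    """Draw one sample of size n from the population and return its mean."""
    return statistics.fmean([random.expovariate(1.0) for _ in range(n)])


means = [sample_mean(SAMPLE_SIZE) for _ in range(NUM_SAMPLES)]

mean_of_means = statistics.fmean(means)
sd_of_means = statistics.stdev(means)
expected_sd = SIGMA / math.sqrt(SAMPLE_SIZE)

# Share of sample means within one standard deviation of their mean;
# for a normal distribution this is about 0.68.
within_one_sd = sum(
    abs(m - mean_of_means) <= sd_of_means for m in means
) / NUM_SAMPLES

print(f"mean of sample means: {mean_of_means:.4f} (population mean {MU})")
print(f"sd of sample means:   {sd_of_means:.4f} (sigma/sqrt(n) = {expected_sd:.4f})")
print(f"share within 1 sd:    {within_one_sd:.3f}")
```

Swapping `random.expovariate(1.0)` for another generator with finite variance (and adjusting `MU` and `SIGMA` to match) should give the same qualitative picture.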

Using the Central Limit Theorem, we can obtain a fairly good estimate of the population mean and standard deviation even if we do not know the distribution of the population itself. As the sample size n increases, the standard deviation of the sample means, σ/√n, shrinks, so the sample means cluster more and more tightly around μ. This means that for a large enough sample size, the sample mean is likely to be quite close to the population mean.
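To see how the spread of the sample means depends on the sample size, here is a hedged sketch that uses rolls of a fair die as the population (mean 3.5, variance 35/12). The sample sizes, the number of repetitions, and the helper name `spread_of_sample_means` are assumptions for illustration. Each time n is quadrupled, the observed spread should roughly halve, in line with σ/√n.

```python
import math
import random
import statistics

random.seed(7)  # fixed seed so the sketch is reproducible

# Population: rolls of a fair six-sided die (mean 3.5, variance 35/12).
SIGMA = math.sqrt(35 / 12)
NUM_SAMPLES = 1_000  # samples drawn for each sample size


def spread_of_sample_means(n):
    """Standard deviation of NUM_SAMPLES sample means, each from n rolls."""
    means = [
        statistics.fmean([random.randint(1, 6) for _ in range(n)])
        for _ in range(NUM_SAMPLES)
    ]
    return statistics.stdev(means)


# Maps n -> (observed spread, theoretical sigma / sqrt(n)).
results = {}
for n in (10, 40, 160):
    results[n] = (spread_of_sample_means(n), SIGMA / math.sqrt(n))
    observed, theoretical = results[n]
    print(f"n={n:>3}: observed sd {observed:.4f}, sigma/sqrt(n) {theoretical:.4f}")
```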

So why is the Central Limit Theorem so important? Because it tells us that the means of samples drawn from a population are approximately normally distributed, no matter what the underlying distribution of the population is. Using this normal distribution, we can easily test our ideas and hypotheses about the population, even though we know nothing about the distribution of the population itself.
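One common way to put this to work is a z-test and a confidence interval for the mean. The sketch below is a minimal illustration, not a full treatment of hypothesis testing: the function names `z_test_mean` and `confidence_interval` are hypothetical, the data are simulated die rolls, and the sample standard deviation is used in place of the unknown σ, which is an assumption that is only reasonable for fairly large samples.

```python
import math
import random
import statistics
from statistics import NormalDist


def z_test_mean(sample, mu0):
    """Two-sided z-test of the hypothesis that the population mean is mu0.

    Relies on the sample mean being approximately normal, and uses the
    sample standard deviation as a stand-in for the unknown sigma.
    """
    n = len(sample)
    standard_error = statistics.stdev(sample) / math.sqrt(n)
    z = (statistics.fmean(sample) - mu0) / standard_error
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


def confidence_interval(sample, level=0.95):
    """Approximate confidence interval for the population mean."""
    n = len(sample)
    mean = statistics.fmean(sample)
    standard_error = statistics.stdev(sample) / math.sqrt(n)
    z_crit = NormalDist().inv_cdf(0.5 + level / 2)
    return mean - z_crit * standard_error, mean + z_crit * standard_error


random.seed(1)  # fixed seed so the sketch is reproducible

# Simulated data: 100 rolls of a die we want to check for fairness.
rolls = [random.randint(1, 6) for _ in range(100)]

z, p_value = z_test_mean(rolls, mu0=3.5)
low, high = confidence_interval(rolls)

print(f"z = {z:.3f}, p-value = {p_value:.3f}")
print(f"95% confidence interval for the mean: ({low:.3f}, {high:.3f})")
```

A small p-value would suggest that the mean roll differs from 3.5; a large one means the data are consistent with a fair die. Nothing here required knowing the distribution of individual rolls, only that the sample mean is approximately normal.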

Thanks for reading. Please feel free to add any comments or ask any questions and I will try my best to answer them.